AI you can feel good about.

Chat with the world's best AI models - all in one platform.

Models Available

From lightweight efficiency to powerful reasoning, find your perfect AI match for any conversation or project.

Powered by AI Leaders

Access cutting-edge AI from 13 industry leaders. Switch between models instantly to find what works best for your unique needs.

A Platform You Can Trust

Privacy First

Wherever possible, your data is not used for training or shared with third parties outside of what is strictly required to provide these services.

No Throttling

We don't rate limit users. Use as much as you want up to your credit total, and purchase additional credits at discounted rates when you need more.

AI for Good

This is a rapidly developing industry, and we want to be part of making sure AI is used responsibly, safely, and for the betterment of society.


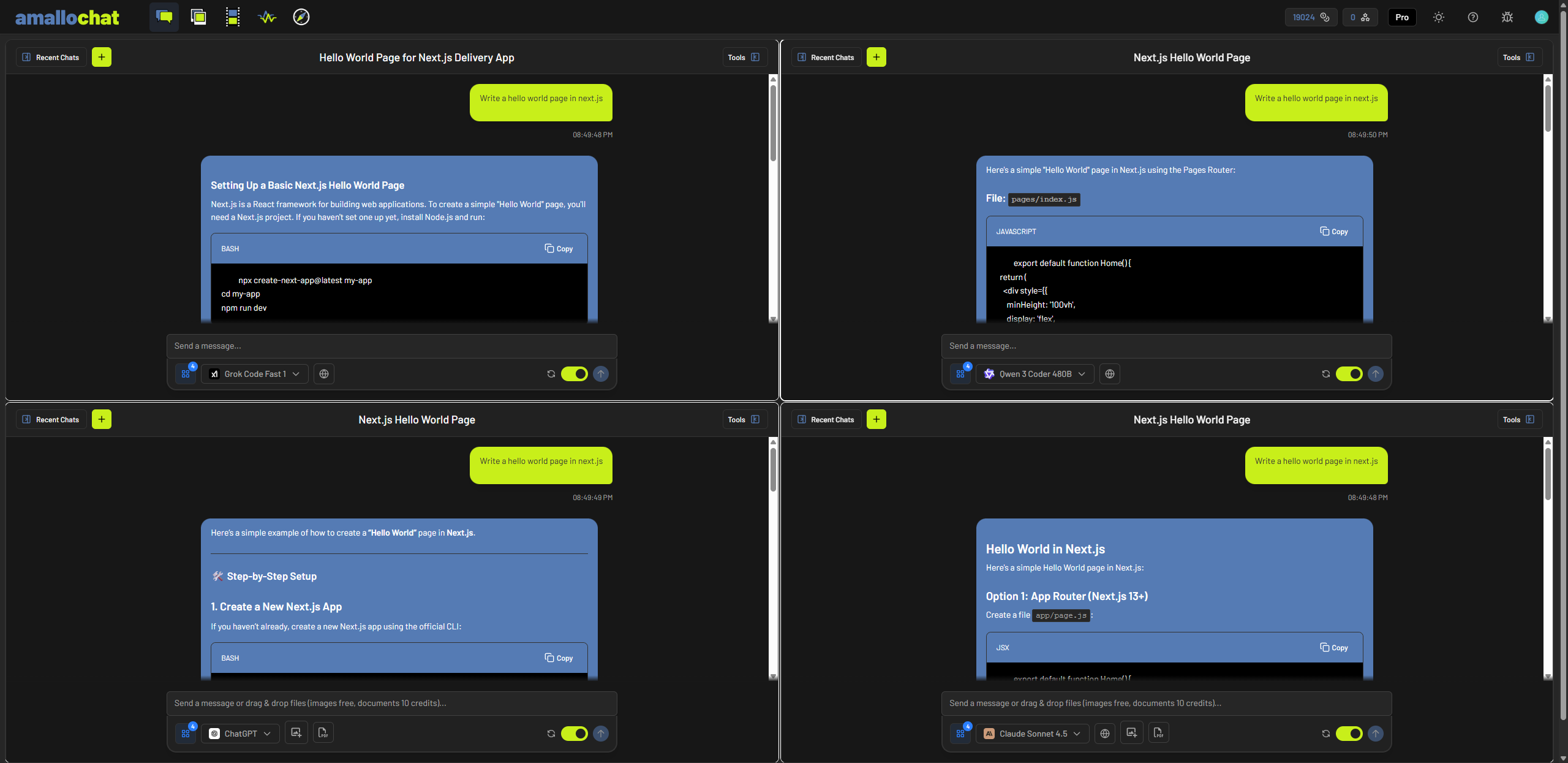

Split Screen

Chat in up to four windows at a time to compare models and responses.

Attachments

Upload images and files to provide the models context and gain valuable insights.

Web Search

Allow models to search the web for specific information, recent events, or anything else. Track sources used with inline citations and a citation list.

Set System Prompt

Set a custom prompt to determine how the AI model interacts with you. Change its behavior to best fit your personality or needs.

Models

- Alibaba Qwen 3 14B
- Alibaba Qwen 3 235B Thinking
- Alibaba Qwen 3 30B A3B
- Alibaba Qwen 3 32B
- Alibaba Qwen 3 Coder 480B
- Alibaba Qwen 3 Next 80B Instruct
- Alibaba Qwen 3 Next 80B Thinking
- Alibaba Qwen 3.6 35B A3B
- Alibaba QwQ 32B
- Anthropic Claude Haiku 4.5
- Anthropic Claude Opus 4.0
- Anthropic Claude Opus 4.1
- Anthropic Claude Opus 4.5
- Anthropic Claude Opus 4.6
- Anthropic Claude Opus 4.7
- Anthropic Claude Sonnet 4.0
- Anthropic Claude Sonnet 4.5
- Anthropic Claude Sonnet 4.6
- DeepSeek V4 Flash
- DeepSeek V4 Pro
- DeepSeek Prover V2 671B
- DeepSeek R1
- DeepSeek R1 Distill Llama 70B
- DeepSeek R1 Turbo
- DeepSeek R1-0528
- DeepSeek R1-0528 Turbo
- DeepSeek V3
- DeepSeek V3-0324
- DeepSeek V3.1
- DeepSeek V3.1 Terminus
- DeepSeek V3.2
- DeepSeek V3.2 Exp
- Google Gemini 2.5 Flash
- Google Gemini 2.5 Flash Lite
- Google Gemini 2.5 Pro
- Google Gemini 3 Flash
- Google Gemini 3.1 Flash Lite
- Google Gemini 3.1 Pro
- Google Gemma 3 12B IT
- Google Gemma 3 27B IT
- Google Gemma 3 4B IT
- Google Gemma 4 26B A4B Instruct
- Google Gemma 4 31B Instruct
- Meta Llama 3.3 70B Instruct
- Meta Llama 3.3 70B Instruct Turbo
- Meta Llama 4 Maverick
- Meta Llama 4 Maverick Turbo
- Meta Llama 4 Scout
- Microsoft Phi-4
- Microsoft Phi-4 Multimodal
- Microsoft Phi-4 Reasoning Plus
- Mistral Devstral Small 2505
- Mistral Small 3.2 24B Instruct
- Moonshot AI Kimi K2.5
- NVIDIA Nemotron 3 Nano 30B
- OpenAI ChatGPT 5
- OpenAI ChatGPT 5.1
- OpenAI GPT-4.1
- OpenAI GPT-4.1 Mini
- OpenAI GPT-4.1 Nano
- OpenAI GPT-4o
- OpenAI GPT-4o mini
- OpenAI GPT-4o Mini Search Preview
- OpenAI GPT-4o Search Preview
- OpenAI GPT-5
- OpenAI GPT-5 Codex
- OpenAI GPT-5 Mini
- OpenAI GPT-5 Nano
- OpenAI GPT-5 Pro
- OpenAI GPT-5.1
- OpenAI GPT-5.1 Codex
- OpenAI GPT-5.1 Codex Max
- OpenAI GPT-5.1 Codex Mini
- OpenAI GPT-5.2
- OpenAI GPT-5.2 Pro
- OpenAI GPT-5.4
- OpenAI GPT-5.4 Mini
- OpenAI GPT-5.4 Nano
- OpenAI GPT-5.5
- OpenAI GPT-OSS 120B
- OpenAI GPT-OSS 20B
- OpenAI O1
- OpenAI O1 Pro
- OpenAI O3
- OpenAI O3 Mini
- OpenAI O3 Pro
- OpenAI O4 Mini
- Perplexity Sonar
- Perplexity Sonar Pro
- Perplexity Sonar Reasoning Pro
- xAI Grok 3
- xAI Grok 3 Fast
- xAI Grok 3 Mini
- xAI Grok 3 Mini Fast
- xAI Grok 4
- xAI Grok 4 Fast
- xAI Grok 4 Fast Reasoning
- xAI Grok 4.1 Fast
- xAI Grok 4.1 Fast Reasoning
- xAI Grok 4.20
- xAI Grok 4.20 Multi-Agent
- xAI Grok 4.20 Reasoning
- xAI Grok Code Fast 1
- Z.ai GLM-4.5
- Z.ai GLM-4.5 Air
- Z.ai GLM-4.6
- Z.ai GLM-4.7 Flash
- Z.ai GLM-5

Choose Your Plan

Select the plan that best fits your needs

Base

Perfect for getting started

- 4000 Credits per month

- 14 Day Money Back Guarantee

- AI Chat with 108 models

- New models and features added all the time!

Plus

Our bread and butter

- 12000 Credits per month

- 14 Day Money Back Guarantee

- AI Chat with 108 models

- 10% discount on credit purchases

- Unlimited use of Mistral Small for free

- BYOK (Bring Your Own Key) support

- New models and features added all the time!

Pro

Engines to overdrive

- 26000 Credits per month

- 14 Day Money Back Guarantee

- AI Chat with 108 models

- 15% discount on credit purchases

- Unlimited use of gpt-5-nano for free

- BYOK (Bring Your Own Key) support

- New models and features added all the time!